The classics are so well-known they’ve become punchlines: acai berry treatments, one simple trick to get rid of belly fat, get rich working from home. Newer scam ad verticals like bitcoin and crypto schemes, home solar energy savings, and nutritional supplements for diabetes sufferers are slamming consumers in every corner of the Internet.

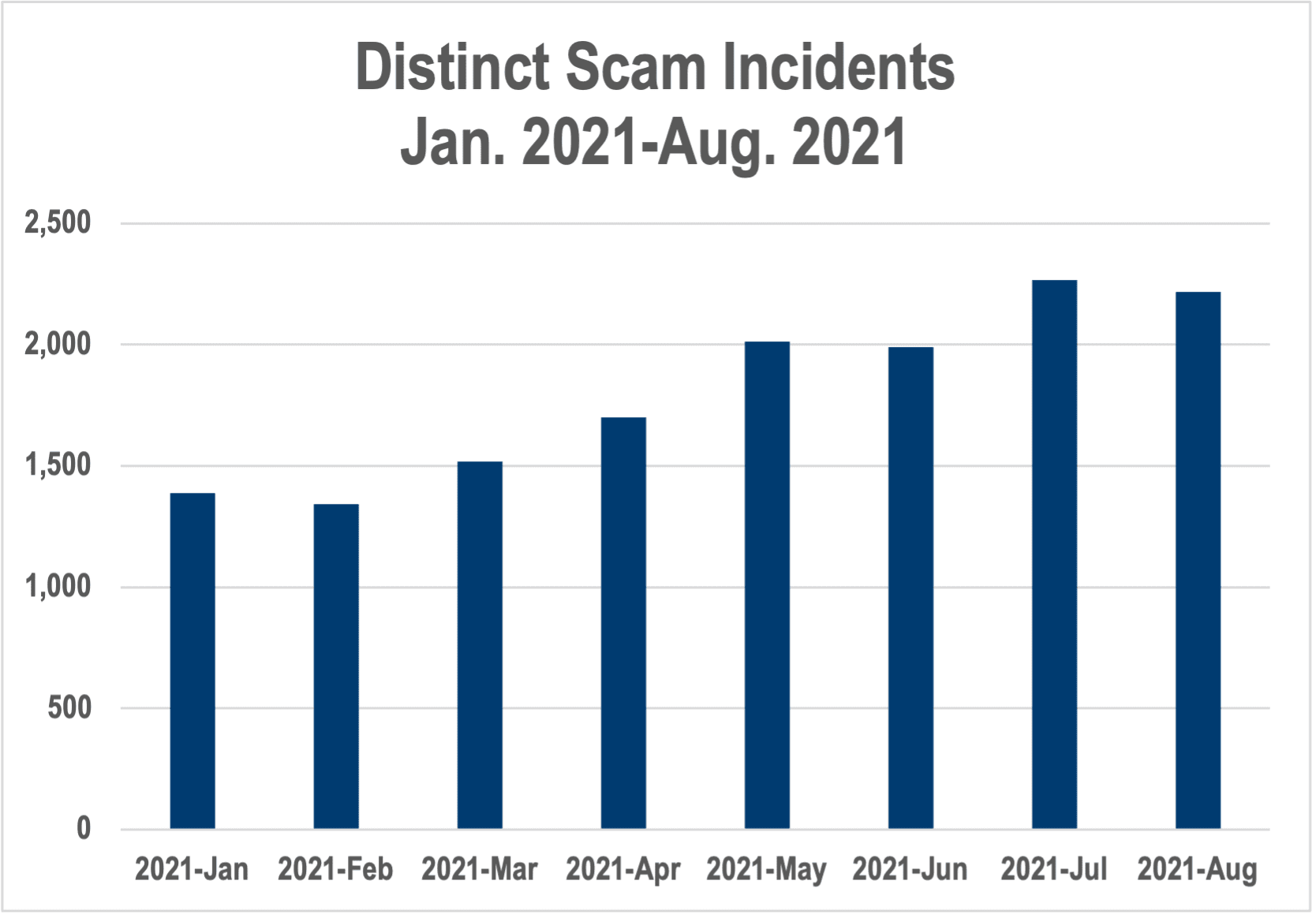

And yet scam and deceptive advertising is simply accepted as an ugly part of digital media. And it’s only getting uglier. Since the beginning of 2021, The Media Trust has detected a 50% increase in scam campaigns hitting publisher properties. Still, too many AdTech companies and publishers look the other way as the scams roll through the programmatic pipes, hoping their audiences have the good sense not to be bamboozled.

Figure 1: Scam incidents (which can account for thousands of impressions) have jumped 35% since the beginning of 2021

Unfortunately, there have been virtually no consequences for sites running scam ads. There’s the occasional massive fine, like when the Federal Trade Commission came down hard on Clickbooth for its acai berry barrage. For the most part, scammers advertise with impunity and AdTech and publishers become their accomplices.

However, consequences may be coming—and the fallout may be dire—judging by the developing online regulatory situation in the UK and increasing attention elsewhere.

The scope of online safety measures

British Prime Minister Boris Johnson has promised to present the Online Safety Bill before Christmas. The bill would require publishers, social networks, and many communication and messaging apps to deter, remove, and mitigate the spread of illegal and harmful content, particularly when it is aimed at children. The bill threatens fines as high as £18 million or 10% of global revenue, whichever is higher, as well as possible criminal sanctions.

Beyond content that sexually exploits children (which must be reported to law enforcement), the harmful content in question includes malicious trolling and racist abuse, with the added goal of “protect[ing] democracy,” presumably by stemming online disinformation. The UK Office of Communications (Ofcom) will enforce the proposed law, which will also give the regulator the power to block access to a site or platform entirely.

However, advocates like famed British personal finance advisor Martin Lewis think the bill doesn’t go far enough, because it is laser-focused on user-generated content and doesn’t regulate online advertising, most notably scam ads. Lewis, whose likeness is often exploited in scam ads pushing bitcoin schemes, has been on a crusade against online scam ads for years, including forcing Facebook into a £3 million settlement of his 2018 lawsuit over more than 1,000 scam ads featuring his image.

Drowning in scam ads

The data backs up Lewis’ claim that “the UK is facing an epidemic of scam adverts.” According to consumer group Which?, the 410,000 fraud cases reported to UK police in 2020 represented a 31% jump from the prior year, with £2.3 billion stolen. When the cost of anxiety and psychological harm is included, Which? puts the total toll on UK consumers at £9 billion.

The greatest frustration among consumers and public advocacy groups is the lack of recourse and the sense that scammers act with impunity, aided by AdTech and digital media. In another Which? report, 34% of consumers said a scam they reported to Google was not taken down, while 26% said the same of Facebook.

In 2020, the National Cyber Security Centre removed more than 730,000 websites hosting scam advertising landing pages featuring the likenesses of Lewis, Richard Branson, and other celebrities. Despite that effort, The Media Trust has seen a 22% increase throughout 2021 in this type of scam content (aka “Fizzcore”), which uses similar landing pages.

Fizzcore is more nefarious than other scams because it employs cloaking to hide malicious creative and/or landing pages from creative audits and other detection techniques. The vast majority of Fizzcore campaigns have pushed bitcoin investment scams, often reusing the same Lewis and Branson content.

Even if online scam advertising fails to make the final Online Safety Bill, a reckoning for scam ads could come in other forms. The UK’s Department for Digital, Culture, Media and Sport (DCMS) is developing the Online Advertising Programme (OAP), a framework intended to enable regulators to address potential consumer harms from digital advertising, including scam ads.

And just to pile on, UK Home Secretary Priti Patel announced a relaunched Joint Fraud Taskforce on Oct. 21. Responding to the significant rise in scams during the peak of the coronavirus pandemic, the taskforce will tackle scams and fraud through public-private partnerships and upgraded government reporting tools.

Fallout beyond the British Isles

The ramifications of all this regulatory buildup ought to make the industry anxious. The Online Safety Bill would already place heavy new burdens on publishers and social media platforms to moderate user content in the UK, but including scam ads could hit revenue directly. In the absolute worst-case scenario, publishers would need to vet every advertiser running on their sites and review all creative to avoid liability. That could lead many risk-averse publishers to shut off programmatic advertising altogether.

Scam advertisers are notoriously hard to pinpoint. They buy through any and every platform available, then use tools like code-level cloaking to hide their malicious intent. When it comes to rooting out scam campaigns, the proof isn’t entirely in the ad code. While creative and domain patterns can be identified and blocklisted, finding scammers also requires investigating the elusive end-advertisers, their motives, and their histories. It’s not impossible, but it requires dedicated teams in constant pursuit.

Beyond stopping scammers cold at the source, the next best way to stem the proliferation of scam ads is to hold publishers and their AdTech partners culpable. And publishers are the low-hanging fruit: the website is where the consumer was attacked, so publishers will always be the prime regulatory target.

Although the pressure is most intense in the UK, regulators in other countries are also paying close attention and looking for a potential roadmap. In the U.S., reform of Section 230 of the Communications Decency Act, which shields online media companies from legal liability for user-generated content, appeals to both major political parties. Really, what politician would say no to the easy win of protecting consumers from online scams? According to the Federal Trade Commission, consumers lost $3.3 billion to fraud in 2020, up from $1.8 billion in 2019, and that figure reflects only the 2.2 million reports filed.

Self-regulation to the rescue?

IAB UK is in discussions with the DCMS on the OAP, but ultimately the trade group believes industry self-regulation is the answer. While self-regulation on the data privacy front became a mockery of itself, self-regulation of scam ads doesn’t need to meet the same fate. First off, trade groups like the IAB need to establish stronger ad quality guidelines that set standards for handling malvertising, scams, and other harmful ads.

In addition, or short of that, publishers need to take control of their own destinies regarding scam ads. Every ad quality provider should be identifying scam ads and enabling publishers to block them. Blocking redirects alone simply isn’t enough for a bad-ad blocker: a publisher serving scam ads is violating the trust of its audience and leaving consumers vulnerable.

Not only is blocking scam ads the right thing for publishers to do, it is also a way to get ahead of (or perhaps help avoid) a regulatory onslaught that could have catastrophic revenue consequences. Failing to act will come back to haunt the industry.